Title: Determining if the Students Learned what We Expected – Assessing Outcomes

Excerpt: To ensure that we are adding value in learning, and acting as a learning-centric institution, we must be willing to determine whether students are achieving our learning goals and outcomes, adjusting to improve success rates where possible. To accomplish this, we must convert our intuitive sense of accomplishment into explicit definitions and measures of learning. By studying this article and its linked resources, and by using the associated shared files, you will be able to:

• Define common terms used in the assessment of student learning.
• Define a useful scale to determine the student’s level of achievement of a learning outcome.
• Analyze and disaggregate tests, projects, papers, etc., and correlate their components with course learning outcomes.
• Evaluate the impact of course changes on learning outcome achievements.
• Explain the relationships between program learning outcomes, course learning outcomes, and course structure and progression.
• Calculate program learning outcome achievements from course learning outcome achievements or by using key assessments at points within the curriculum.
• Describe how learning outcomes can be assessed for programs such as General / Liberal Education, Honors, and for extracurricular learning.
• Compare and evaluate external tools for assessing general education learning outcomes.
• Formulate standards of success for a program, evaluate its status, and set goals.
• Describe the relationship between goals and outcomes, and how outcome assessment data could be used to analyze progress toward goal achievement at the department, college/school, and institutional levels.


Author(s): Graham Glynn
Date last modified: June 8, 2019

Categories: Chief Academic Affairs Office Staff, Dean’s Office Staff (Deans, Executive Deans, Associate/Assistant Deans, etc.), Department Chair Office Staff (Chairs, Assistant Chairs, Program Directors, etc.), Office Director’s Staff (Director, Assoc./Assist. Director, Coordinator, etc.)

Tags: Assessment, Capstone Assessment, Closing the Loop, College/School Learning Goals, Continuous Quality Improvement, Course Learning Outcomes, Department Learning Goals, Direct Assessment, General Education, Honors Program, Indirect Assessment, Institutional Learning Goals, Learning Outcome Achievement Level, Liberal Education, Program Learning Outcomes, Value Added

Introduction

To determine whether students are achieving our learning outcomes, we must first address how learning outcome achievement can be quantified and measured, and then determine how we might use this data, or capstone assessment data, to determine achievement rates for program and institutional learning outcomes. This includes credential programs, the Liberal/General Education Program, Honors Programs, and even extracurricular learning outcomes. Once weaknesses are identified and treatments executed, we must determine whether the treatments were effective and should result in permanent changes to courses and programs.

Defining and Assigning Achievement Levels for Learning Outcomes

The article “Defining What, Where, and When We Want Students to Learn” describes the process of defining learning from the institutional level down to the course level. When measuring the achievement of learning outcomes, it is common to see three measures defined: beginning, approaching, and met/mastered. However, defining five levels (described in Table 1) that align with the A through F grading system creates a parallelism that makes it easier for faculty to assign the achievement level. Faculty know the performance expectations for an A student, for example.

Defining the nomenclature used at an institution can be a fun discussion. What matters most is not the precise terms used, but that a consistent set of terms and definitions be adopted by all departments. If this is not accomplished, it becomes very difficult to produce coherent assessment reports for accrediting bodies, etc. A challenge here is that different accrediting bodies may each require a specific nomenclature, which can cause problems when writing a report for institutional accreditation.

Note that Mastery is not given a grade in Table 1, as it is reserved for achievement beyond the expectation of the course. Achievement at this level leads to an interesting conundrum. Ideally, a student receives an A grade at the completion of a course by achieving proficiency in all the CLOs, ensuring that they are well rounded and there are no holes in their education. This is particularly important if the course is a prerequisite for others. If a student is rewarded in the grading process for achieving a CLO at a level beyond proficient (given bonus points, for example), we are essentially enabling them to have deficiencies in some CLOs and compensate for this by mastery in others. They could therefore potentially get an A for the course while having deficiencies in some of the CLOs. This is not ideal, so we must provide other mechanisms, outside of grading, to reward the student who exceeds the proficient level in some CLOs. This is addressed in the article on Evaluating and Rewarding Student Learning.

From these parameters, an outcome assessment rubric, such as the one shown in Table 3 for CLO #1, can be used to assign the achievement level for every CLO for each student.

Collecting data on CLOs enables the instructor to determine the areas of strong and weak achievement, and to make and implement decisions about course design, learning activities, pedagogical approach, etc., to try to improve the performance levels. Once changes have been made, data on the new achievement rates should be used to determine whether the treatment has had a positive impact, and whether to make the changes permanent. This process should be repeated regularly so the quality of the course is continuously improving.

Aggregating these individual achievement rates to produce average achievement rates on a learning outcome for all the students in the course, as shown by the illustrative histogram in Figure 2, provides direct evidence of learning achievement. For example, a course redesign might result in the right shift in the achievement levels for Outcome 1 shown in Figure 2. This would be considered a very positive impact and would support keeping the new course design. It would also be considered a great example of data supporting “closing the assessment loop”. Similar graphs should be generated for each of the CLOs to get a complete picture of the impact of the course change.

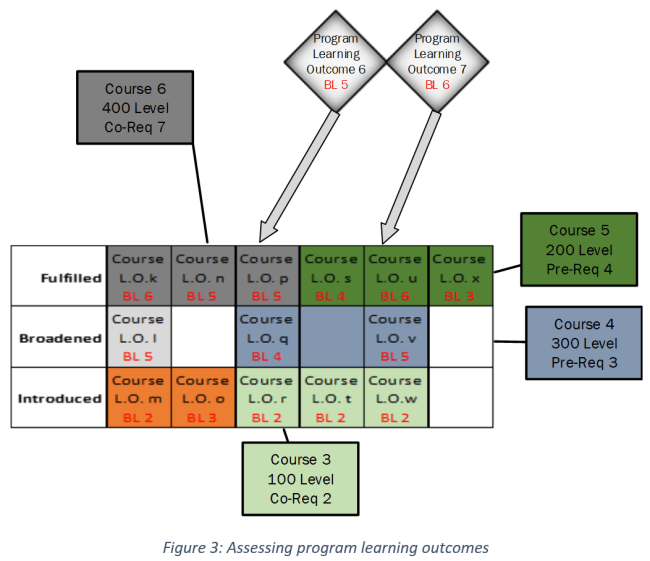
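The before-and-after comparison described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the level names follow the scale named in this article, but their ordering, the student data, and the helper names (`level_distribution`, `mean_level_index`) are hypothetical, and treating ordinal levels as evenly spaced numbers is a simplification rather than a recommended statistic.

```python
from collections import Counter

# Achievement scale, lowest to highest (ordering assumed for illustration).
LEVELS = ["Novice", "Emerging", "Developing", "Proficient", "Mastery"]


def level_distribution(levels):
    """Return the percentage of students at each level, in scale order."""
    counts = Counter(levels)
    total = len(levels)
    return {level: round(100 * counts[level] / total, 1) for level in LEVELS}


def mean_level_index(levels):
    """Average position on the scale (0 = Novice); a rough summary only."""
    return sum(LEVELS.index(level) for level in levels) / len(levels)


# Hypothetical achievement levels on one CLO for 20 students per offering.
before = ["Novice"] * 4 + ["Emerging"] * 8 + ["Developing"] * 6 + ["Proficient"] * 2
after = ["Novice"] * 1 + ["Emerging"] * 4 + ["Developing"] * 9 + ["Proficient"] * 6

for label, data in (("Before redesign", before), ("After redesign", after)):
    print(label)
    for level, pct in level_distribution(data).items():
        # One '#' per 5 percentage points gives a quick text histogram.
        print(f"  {level:<11} {'#' * int(pct // 5)} {pct}%")

shift = mean_level_index(after) - mean_level_index(before)
print(f"Shift in mean level index: {shift:+.2f}")
```

A positive shift corresponds to the "right shift" in the histogram; the per-level percentages are what faculty would actually inspect, since the mean index hides the shape of the distribution.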

The intent of this article is not to provide a comprehensive list of direct and indirect measures of student learning achievement, as this has been addressed comprehensively in other publications. In essence, direct measures of student learning achievement require a strong correlation between the evaluative process/tool and the learning outcome. The evaluator should be making an explicit decision about the level of achievement of each learning outcome for each student observed. Indirect measures compound the results, or are not designed to correlate directly with the learning outcomes, and are therefore less useful though still valid. A survey of employers about the competencies of recent graduates would be an example of an indirect measure.

Determining Learning Outcome Achievement at the Program Level

If the learning outcomes in a course are derived from a curriculum mapping process similar to that described in “Defining What, Where, and When We Want Students to Learn”, then the level of program learning outcome (PLO) achievement is directly dependent on the achievement levels of its mapped CLOs. Figure 3 shows, in each of its columns, the progression of courses, and their associated outcomes, that contribute to each PLO. Every cell within the column produces an outcome achievement level for its associated PLO. For example, PLO 6 (with a Bloom’s Level, BL, of 5) at the top of the diagram is fulfilled by CLO P of Course 6 (the gray boxes), broadened by CLO Q in Course 4, and introduced by CLO R in Course 3. As a result, the student moves up the Bloom’s ladder as they progress through the curriculum, moving through courses at level 100, 300, and finally 400.

Determining the Student’s Level of Achievement of a Program Learning Outcome

As with CLOs, it is helpful, and perhaps even more important, to know whether the students are achieving the novice, emerging, developing, or proficient level on the PLOs. There are two basic ways in which this can be determined.
The first, and perhaps the simpler of the two, is to administer some form of capstone examination or project designed to assess the PLO directly. These are usually administered in senior courses within the major. The second approach is to use CLO assessment data to determine the level at which the PLO was achieved. CLOs that are written to introduce or broaden a PLO are likely to be written very differently than the PLO itself, mainly because they are addressing lower levels of Bloom’s taxonomy. However, the CLO that is designed to fulfill a PLO could be the exact duplicate of the PLO, since at this point the student, who is probably in an upper-level course, has built from the basics and is ready to tackle activities such as synthesis. (Remember, the PLO is written using the highest level of Bloom’s verb appropriate for the credential.) This does not always have to be the case, however, as the PLO could be fulfilled by the amalgamation of the introductory, broadening, and fulfilling CLOs. In the first case, the level of achievement of the fulfilling CLO is simply assigned to the PLO. In the second case, the faculty will have to decide how to calculate the achievement level from the CLO achievement levels.

Figure 4 shows learning outcome achievements for a single student. The top grid lists three of the PLOs on its vertical axis. The student’s (John Doe) achievement level on each is indicated by checking one of the horizontal cells. The second grid down shows John’s achievement level on each of the CLOs associated with PLO 7. The bottom grid does the same for PLO 6. The arrows on the left side show the relationship between each PLO and the CLOs that serve it.

There are several ways that the performance levels for John’s PLOs 6 and 7 in the top grid can be calculated. The faculty member could develop a formula that converts the performance data for CLOs U, V and W to an achievement level for PLO 7. Alternatively, since W and V are actually building John’s knowledge and skills so he can fulfill the outcome in U, one could just use the U performance level to assign a performance level to PLO 7, as shown by the arrows on the right of the figure. For PLO 6, since the fulfilling CLO P was achieved at a proficient level, the PLO is assigned the same level. This assumes that, even though John only achieved the developing level on the dependent Q and W CLOs, he demonstrated that he overcame these deficiencies by achieving the proficient level for P.

Once course tests and assignments have been disaggregated and correlated with learning outcomes as shown in Figure 1 above, some additional interesting program-wide analysis can be performed. At Saint John Fisher College Wegman’s School of Pharmacy, for example (personal communication), each question on every quiz, test, and exam in every course is coded for its Bloom’s Taxonomy level, accreditation standard, and learning outcome. This enables the college to produce a cross-course, cross-year, longitudinal report on how each student is performing in each biological subsystem, as shown in Table 4.
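The two roll-up options described above for PLO 7 (a faculty-defined formula over CLOs U, V and W, or simply adopting the level of the fulfilling CLO U) can be sketched as follows. The achievement levels, the weights, and the function names are hypothetical, chosen only to show that the two options can produce different answers; the ordering of the scale and the round-down rule are likewise assumptions, not a prescribed method.

```python
# Achievement scale, lowest to highest (ordering assumed for illustration).
LEVELS = ["Novice", "Emerging", "Developing", "Proficient", "Mastery"]


def plo_from_fulfilling_clo(clo_levels, fulfilling_clo):
    """Option 1: the PLO simply takes the level of its fulfilling CLO."""
    return clo_levels[fulfilling_clo]


def plo_from_weighted_clos(clo_levels, weights):
    """Option 2: a weighted average of level positions, rounded down.

    The weights are a faculty decision; the values used below are made up.
    """
    total_weight = sum(weights.values())
    score = sum(LEVELS.index(clo_levels[clo]) * w for clo, w in weights.items())
    return LEVELS[int(score / total_weight)]


# Hypothetical levels for one student on the CLOs that serve PLO 7.
plo7_clos = {"U": "Proficient", "V": "Developing", "W": "Emerging"}

# Hypothetical weights favoring the fulfilling CLO U.
plo7_weights = {"U": 3, "V": 1, "W": 1}

print("Fulfilling CLO only:", plo_from_fulfilling_clo(plo7_clos, "U"))
print("Weighted formula:   ", plo_from_weighted_clos(plo7_clos, plo7_weights))
```

With these made-up values, the first option reports Proficient while the weighted formula reports Developing, which illustrates why the faculty need to agree on one rule before comparing PLO achievement across students or years.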

As can be seen from the data in Table 4 above, Student C is having performance issues in the renal subsystem when compared to the Group Average. This data comes from 28 questions given in 7 different exams across multiple courses. This learning deficiency may not have been obvious to the instructors of the individual courses. Should a pharmacist graduate with poor knowledge about the renal system? Many health professions programs have moved to a mastery approach, requiring achievement of all the PLOs for a student to graduate.
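A cross-course comparison of this kind can be sketched as below, assuming each answered question has already been coded with a subsystem. The records, the 15-point gap used to flag a deficiency, and the function names are all hypothetical; the sketch does not reproduce the college's actual system or the figures in Table 4.

```python
from collections import defaultdict
from statistics import mean


def subsystem_scores(responses):
    """Turn (student, subsystem, is_correct) records into percent correct
    per student per subsystem, pooled across all exams and courses."""
    tally = defaultdict(lambda: defaultdict(list))
    for student, subsystem, is_correct in responses:
        tally[student][subsystem].append(1 if is_correct else 0)
    return {
        student: {sub: 100 * mean(marks) for sub, marks in subs.items()}
        for student, subs in tally.items()
    }


def flag_deficiencies(scores, gap=15):
    """List (student, subsystem) pairs scoring at least `gap` points
    below the group average. The threshold is an illustrative assumption."""
    subsystems = sorted({sub for subs in scores.values() for sub in subs})
    flags = []
    for sub in subsystems:
        group_avg = mean(subs[sub] for subs in scores.values() if sub in subs)
        for student, subs in sorted(scores.items()):
            if sub in subs and group_avg - subs[sub] >= gap:
                flags.append((student, sub))
    return flags


def answers(student, subsystem, n_correct, n_total=4):
    """Build hypothetical question records for one student and subsystem."""
    return [(student, subsystem, i < n_correct) for i in range(n_total)]


# Hypothetical data: three students, two subsystems, four questions each.
responses = (
    answers("A", "renal", 4) + answers("B", "renal", 3) + answers("C", "renal", 1)
    + answers("A", "cardiac", 3) + answers("B", "cardiac", 3) + answers("C", "cardiac", 3)
)

print(flag_deficiencies(subsystem_scores(responses)))
```

Because the questions are pooled across exams, a weakness that is invisible within any single course can still surface at the program level.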

Indirect Measures of Program Outcome Achievement

Reporting Mechanisms for Learning Outcome Achievement

The easiest mechanism for collecting outcome achievement data is to have faculty report it as they submit grade information at the end of each term. If the learning outcomes are listed for each course in the Student Information System, it should be relatively easy to configure a table in which the achievement levels of each student can be entered by checking a box. This data could then be rolled up to provide achievement rates for programmatic outcomes.

Determining Learning Outcome Achievement Within the Liberal/General Education Program

The Liberal/General Education program (L/GE) is usually a substantial part of all undergraduate credentials and, to a lesser extent, graduate programs. Its learning outcomes usually embody most of the institutional learning goals. The courses are delivered by multiple colleges/schools and programs, which can make coordination of the L/GE program, and its assessment, challenging. The L/GE program, like all other programs at the institution, should have clearly defined goals and outcomes. This program can therefore create a curriculum map to determine which courses and associated CLOs serve the PLOs. Achievement levels on these CLOs can therefore be rolled up to generate achievement data for the L/GE program. In addition, many of the learning outcomes are replicated in multiple courses delivered by multiple programs. It is therefore advantageous to assess Liberal/General Education outcomes either independently of the courses, or in special courses.

Since the Liberal/General Education program is intended to provide the learner with foundation skills for success in the major (critical thinking, information literacy, etc.), an indirect but valuable assessment approach can be to survey instructors of the initial courses in the major about how well the students are prepared in these areas. This could be incorporated into each program’s cyclical program review / self-study process.
Institutional accreditation agencies take continuous quality improvement of the L/GE program very seriously and provide resources to assist with the process. The Western Association of Schools and Colleges (WASC) Accreditation Commission has, for example, developed a rubric for the assessment of the General Education program, and the seven regional accrediting agencies within the U.S. have together developed a guide for effective assessment of student achievement.

Determining Learning Outcome Achievement in the Honors Program

Since the Honors Program is based on courses that have additional learning outcomes, or outcomes written at a higher Bloom’s taxonomy level than non-honors courses, these learning achievements can be assessed, and treatments implemented, in the same way as for any other program.

Determining Extracurricular Learning Outcome Achievement

Extracurricular learning outcomes are in many respects like CLOs. They are stated in the same way and can be measured using similar rating scales. However, since operational areas such as Student Affairs rarely give formal assignments or tests to students, the achievement of extracurricular learning outcomes is often measured in less direct ways. Student employees can be assessed by supervisors, student organizational leaders by faculty and staff advisors, athletes by coaches, etc. Rubrics can be used to record assessments based on direct observation of behavior, etc.

What Can PLOs Tell Us About a Program?

Traditionally, a student graduates when they pass all the courses that are a part of a prescribed curriculum. They could accomplish this with a 2.0 GPA but would be missing a great deal of education compared to a high-achieving student. The top grid in Figure 4 represents achievement of three PLOs but would in reality list all of them. You can imagine that each student’s performance levels would be distributed across the grid in a unique pattern.
Similarly, when all the individual graduates’ data is averaged together, a similar grid is produced representing the average achievement of a graduating class on all the PLOs. (In addition to the average, a distribution of achievement levels for each PLO, similar to Figure 2, might also be worth examining.) This is the ultimate data which must be discussed by the faculty in the program. If they are dissatisfied with the achievement rate on any PLO, they can determine its root cause by drilling down to the individual CLO achievement rates if necessary, and then implement strategies to remedy any problems found. Note that a disadvantage of assessing PLOs using capstone projects, etc., is that it is harder to identify the location of the root cause leading to an issue. With assessment of CLOs this is much easier to achieve.

Assuming a program has ten learning outcomes, in how many of these does a student need to be proficient to be considered a worthy graduate of the program? All? Perhaps they could be proficient in seven and be developing in three? Are some more critical than others, and should these be designated as “core” and require proficiency for graduation? For which PLOs would it be acceptable to perform at a lower level? The answers to these questions need to be determined by the faculty instructing in the program and used to implement appropriate policies. How many of our departments have ever had this conversation?

Determining Achievement of College/School and Institutional Learning Goals

Goals are meant to be broad and non-specific and, as such, not directly measurable. Progress on goals is reflected by achievement of the underlying learning outcomes. Assessment of goals is therefore usually based on a narrative containing a somewhat subjective analysis of the learning outcomes achievement. Similarly, proposals aimed at improving goal achievement are usually based on strategies that improve achievement of those underlying outcomes.
Measuring Program Goal Achievements

Formal reports on goals are rarely required. Accrediting bodies focus nearly exclusively on PLOs. However, it may be nice to have a general sense of how the institution is doing on them. By a similar “roll-up” process to that shown in Figure 4, the program goal achievement rates can be generated from the PLO achievement data. This will require some creative interpretation since, by their nature, goals are generally nebulous and not easy to quantify. However, a cogent argument based on the PLO achievement rates should serve to justify any conclusions.

Putting it All Together

Assessment is a continuous process which must evolve as our programs and the demands on them do. To ensure that departments are giving it the attention it needs, it is a good practice to schedule an assessment day, ideally at the end of each semester, but at least yearly. This time should be reserved for faculty within each program to sit down as a group, discuss assessment data, plan assessment data gathering, propose and approve curricular changes, etc.


Determining if the Students Learned what We Expected – Assessing Outcomes by Graham Glynn is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.


It is within courses that the majority of learning occurs, and they are therefore the best place in which to assess it. The faculty instructing our courses are most qualified to determine the degree to which students achieve learning outcomes. If you ask most faculty if they are assessing student learning they will indicate they are – after all, they give exams and assign grades! However, if you restructure the question and ask, “Do you know the extent to which each student in your course achieved each course learning outcome (CLO)?” you would most likely get a very different and less confident answer. Yes – faculty do have an intuitive sense of whether the students are “getting it” based on their interactions with students in the learning environment and from grades. However, using this intuitive sense it would be difficult to quantify how successful the learning achievements are, and whether for example, a change in the course design had a big impact on student learning.


Table 1 does not imply that grades are directly correlated with levels of outcome achievement, although this is the ideal. A grade usually derives from a complex set of tests, class participation, assignments, etc., which evaluate achievement of multiple learning outcomes, often simultaneously. We must therefore disaggregate these activities into the components that align with the CLOs before we can determine outcome competency levels, as shown in Figure 1. Assuming a tight correlation between a question and a CLO, the performance on each individual test question may provide some information on how each student is doing on every CLO. Similarly, a component of a paper or other assignment could also provide data on an individual outcome achievement. To measure this, the instructor has to clearly disaggregate the assignment into parts associated with the CLOs and assign an achievement level for each. This is usually accomplished using a grading rubric for the assignment. The achievement level could therefore be determined by a set of parameters such as shown in Table 2 and illustrated in Figure 1.
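The disaggregation step described above can be sketched as follows, assuming each exam question has been mapped to a single CLO. The question-to-CLO mapping, the point values, and especially the cut-off fractions are hypothetical stand-ins for the kind of parameters Table 2 would supply. Mastery is left out of the cut-offs because the article reserves it for achievement beyond the expectation of the course.

```python
from collections import defaultdict

# Hypothetical mapping of exam questions to the CLO each one assesses.
QUESTION_TO_CLO = {
    "Q1": "CLO 1", "Q2": "CLO 1",
    "Q3": "CLO 2", "Q4": "CLO 2",
    "Q5": "CLO 3",
}

# Hypothetical cut-offs (fraction of available points), highest first.
# Anything below the last cut-off is treated as Novice.
LEVEL_CUTOFFS = [(0.90, "Proficient"), (0.75, "Developing"), (0.60, "Emerging")]


def clo_levels(points_earned, points_possible):
    """Return {CLO: achievement level} for one student.

    Points are summed per CLO (across questions) rather than per exam,
    so the result describes each outcome instead of the overall grade.
    """
    earned = defaultdict(float)
    possible = defaultdict(float)
    for question, clo in QUESTION_TO_CLO.items():
        earned[clo] += points_earned[question]
        possible[clo] += points_possible[question]

    levels = {}
    for clo in possible:
        fraction = earned[clo] / possible[clo]
        levels[clo] = next(
            (name for cutoff, name in LEVEL_CUTOFFS if fraction >= cutoff),
            "Novice",
        )
    return levels


# Hypothetical exam: points available and one student's points earned.
possible = {"Q1": 10, "Q2": 10, "Q3": 10, "Q4": 10, "Q5": 20}
student = {"Q1": 10, "Q2": 9, "Q3": 7, "Q4": 8, "Q5": 10}

print(clo_levels(student, possible))
# {'CLO 1': 'Proficient', 'CLO 2': 'Developing', 'CLO 3': 'Novice'}
```

This student earned 44 of 60 points overall, a single number that hides the fact that achievement on CLO 3 is far weaker than on CLO 1.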


For the reasons outlined above, grades are generally considered an indirect and gross indicator of learning achievement and are therefore not considered reliable for determining the impact of a treatment (e.g., introduction of a new lab assignment) on CLOs. There is an exception to this rule however. If all the test and exam questions were designed with a tight correlation with CLOs, and all assignments were evaluated with rubrics that break down their expectations and also align them with the outcomes, then there should be a good correlation between grades and student learning achievement within the course. However, the grade would still be an aggregated measure of the learning achievement and the instructor would have to look at the performance on each question and at each assignment expectation to determine the achievement levels for CLOs, i.e. a horizontal (Table 2 rows) rather than a vertical (Table 2 columns) integration process.


Unfortunately, not all programs can have a curriculum map that tightly correlates PLOs and CLOs. When such a map does not exist,

So far, direct measures of student learning have been considered. These are explicit decisions about learning outcome achievement levels based on observed evidence of student learning. Another important, though indirect, measure of learning outcome achievement is feedback from employers and graduate programs that accept the program graduates. This feedback can be most directly applied to program improvement if it is based on an assessment of the goals and outcomes, their appropriateness, completeness, and how well they are preparing the students for their post-graduate endeavors.

Ideally, for chairs, deans, the Provost, and the President to have confidence in the program assessment data and use it for decision making, they should be able to drill down from goal achievement statements, through PLO data, and finally to the course outcome data and the evidence on which it was based. One of the most powerful mechanisms for doing this is to store the artifacts that represent student achievements (tests, assignments, art work, etc.) in an electronic portfolio (ePortfolio), along with the rubrics used to assign achievement levels to the CLOs, and their resultant scores. While these may seldom be visited by anyone other than the program faculty, their availability provides evidence to the administration, program reviewers, and accreditation agencies that the process has integrity. ePortfolio capabilities are addressed more completely in
